SnoMotes: Arctic Robots

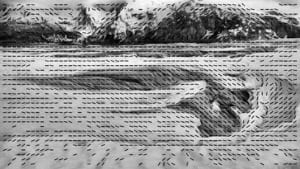

Many important scientific studies, particularly those involving climate change, require weather measurements from the ice sheets of Greenland and Antarctica. Because these environments are harsh and dangerous, it would be advantageous to deploy a group of autonomous, mobile weather sensors rather than accept the expense and risk of a human presence. For such a robotic system to be viable, each rover must be able to navigate to a desired location without relying on a human operator. However, glacial terrain presents a variety of hazards beyond the obvious temperature extremes. Hard-packed snow dunes and softer snow drifts form steep inclines that must be overcome, vertical cracks in the ice sheet can easily swallow a small rover, and varying lighting conditions in the all-white environment make these hazards difficult to detect.

Our research in this domain therefore focused on two goals: developing a vision-based navigation system for arctic robots, and designing, constructing, and fielding a set of prototype arctic rovers on several glaciers in Alaska to validate these methods. Techniques for amplifying subtle terrain texture proved effective at uncovering potential hazards, and methods for extracting visual landmarks from the snow-covered terrain enabled the rovers to track their progress toward a goal. We also developed methods that allowed the rovers to build a ‘mental’ 3-D model of the terrain. With this model, the robots could plan efficient routes to their goal that minimized travel through treacherous terrain. The project also encompassed various robotic hardware development efforts before it was retired in 2012.
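Route planning over a terrain model can be illustrated with a generic technique. The sketch below is not the SnoMotes implementation, which this page does not describe. It assumes the 3-D terrain model has been reduced to a 2-D grid of elevations and runs Dijkstra's algorithm with a slope-penalized step cost, treating moves steeper than a threshold as impassable. The function name `plan_route` and the parameters `cell_size`, `slope_weight`, and `max_slope` are hypothetical, chosen only for illustration.

```python
import heapq
import math


def plan_route(heights, start, goal, cell_size=1.0, slope_weight=10.0, max_slope=0.5):
    """Dijkstra search over a 2-D height map (hypothetical sketch, not the SnoMotes planner).

    heights: list of rows of elevations (same units as cell_size).
    start, goal: (row, col) tuples.
    Moves whose slope (rise / run) exceeds max_slope are treated as impassable;
    other moves cost run * (1 + slope_weight * slope), so steep ground is avoided.
    Returns the list of cells from start to goal, or None if the goal is unreachable.
    """
    rows, cols = len(heights), len(heights[0])
    # 8-connected grid: straight and diagonal moves.
    neighbours = [(-1, 0), (1, 0), (0, -1), (0, 1),
                  (-1, -1), (-1, 1), (1, -1), (1, 1)]
    dist = {start: 0.0}
    parent = {start: None}
    frontier = [(0.0, start)]
    while frontier:
        cost, cell = heapq.heappop(frontier)
        if cell == goal:
            # Walk parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parent[cell]
            return path[::-1]
        if cost > dist[cell]:
            continue  # stale queue entry; a cheaper route was already found
        r, c = cell
        for dr, dc in neighbours:
            nr, nc = r + dr, c + dc
            if not (0 <= nr < rows and 0 <= nc < cols):
                continue
            run = cell_size * math.hypot(dr, dc)
            slope = abs(heights[nr][nc] - heights[r][c]) / run
            if slope > max_slope:
                continue  # too steep for a small rover (assumed threshold)
            new_cost = cost + run * (1.0 + slope_weight * slope)
            if new_cost < dist.get((nr, nc), math.inf):
                dist[(nr, nc)] = new_cost
                parent[(nr, nc)] = cell
                heapq.heappush(frontier, (new_cost, (nr, nc)))
    return None


# A steep drift (height 2.0) blocks column 2 except in the bottom row.
terrain = [
    [0.0, 0.0, 2.0, 0.0, 0.0],
    [0.0, 0.0, 2.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0, 0.0],
]
route = plan_route(terrain, (0, 0), (0, 4))
print(route)  # [(0, 0), (1, 1), (2, 2), (1, 3), (0, 4)]
```

The planner detours through the single gap in the drift rather than climbing it. Weighting cost by slope, rather than simply forbidding steep cells, lets a planner trade a slightly longer route for gentler ground; the specific weights here are arbitrary.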